Best Quality Management Systems (QMS)

Whether you manufacture medical devices or process food, QMS software helps you meet standards like ISO 9001 and FDA regulations. We’ve reviewed the top platforms focusing on traceability tools, risk management, and industry-specific compliance.

- Configurable, modular design with a variety of add-on modules

- Mobile-first design on uniPoint Web version

- Allows concurrent users rather than named seats

- Flexible deployment options with access on all devices.

- Has 25+ pre-installed modules for advanced functionality.

- Full validation included for life sciences companies.

- Provides in-depth audit trails

- High configurability and customization options

- Ready to use best practices

Quality management tools do more than maintain product quality—they align your processes with industry standards and workflows, from pharmaceuticals to heavy manufacturing.

- uniPoint: Best for Aerospace

- QT9 QMS: Best for Pharmaceuticals

- Octave Reliance: Best for Manufacturing

- Singlepoint: Best No-Code QMS for Manufacturing

- MasterControl: Best for Medical Devices

- Ideagen: Best for Food and Beverage

- Greenlight Guru: Best Integrated Design Controls

- Unifize EQMS: Best for Automotive

- QAD EQMS: Best Supplier Quality Management Tools

- Harrington QMS: Best for ISO Quality Compliance

- Qualio: Best Document Management Tools

- Intellect QMS: Best Risk Management Tools

- ComplianceQuest: Best Salesforce-Native QMS

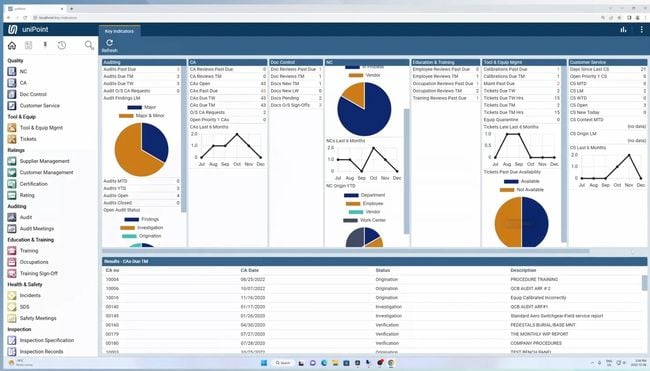

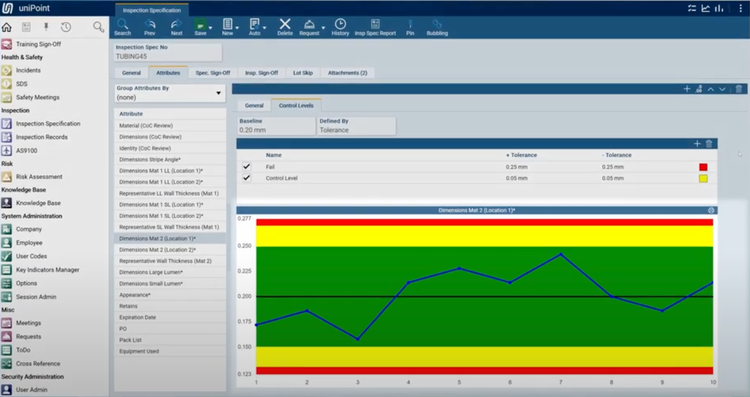

uniPoint - Best for Aerospace

The AS9100 feature in uniPoint integrates with the inspections module to streamline first article inspection (FAI), including auto-generating AS9102 Forms 1, 2, and 3. It pulls data directly from engineering drawings using optical character recognition (OCR) and geometric dimensioning and tolerancing symbology. This provides full traceability and accurate inspection specifications.

uniPoint’s integration with inspection points allows your quality teams to verify incoming materials like high-performance alloys for turbine blades. It also supports efficient quality checks for outgoing assemblies like completed engine modules. If the system detects a non-conformance, it automatically generates an NCR, linking the issue back to the relevant inspection records. This ensures compliance with aerospace standards, minimizes production delays, and supports consistent product quality.

Due to its highly configurable design, this QMS does not have set pricing, though we estimate that it starts at $225/user/month. The system uses a concurrent seat and module-based pricing model, so it’s highly variable based on your company’s needs.

See our full review of uniPoint.

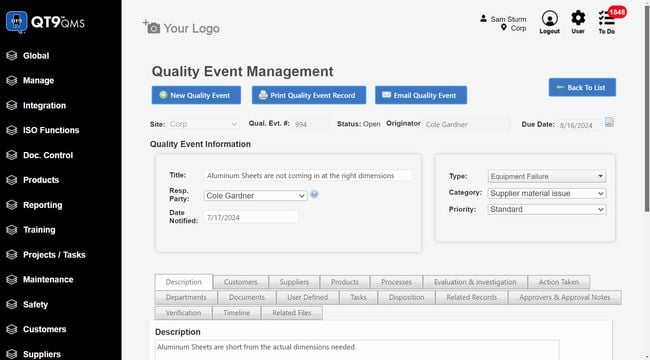

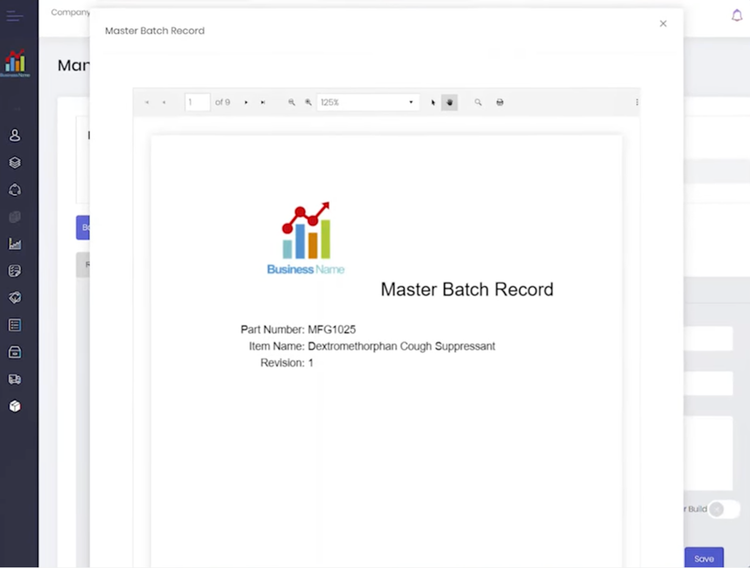

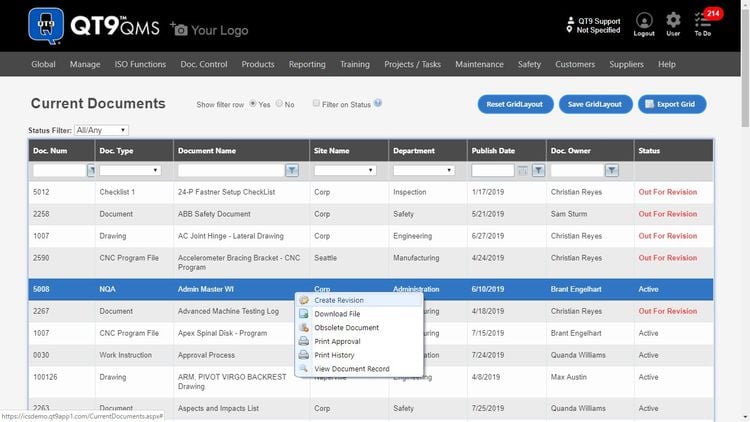

QT9 QMS - Best for Pharmaceuticals

QT9 QMS allows you to create master batch records (MBR) and electronic batch records (EBR) in just a few clicks:

- MBR: A static document prepared before production with formulas and quantities for raw materials and step-by-step manufacturing guidelines; also outlines required equipment and quality control checkpoints to ensure compliance with cGMP standards.

- EBR: Records the production process for a specific pharmaceutical batch, capturing actual material usage and process conditions like temperature; logs real-time equipment performance, QC test results, and operator electronic signatures for FDA 21 CFR Part 11 requirements.

The system connects inspection records directly to jobs, ensuring quality results connect to their corresponding batch. Additionally, the system flags the batch immediately if an issue arises, like a failed QC test for endotoxin levels. This gives your production team time to halt operations, investigate, and resolve the problem before it impacts patient safety.

QT9 QMS integrates label printing into the batch record workflow. Labels auto-populate with details such as lot numbers and expiration dates. These labels meet FDA and GMP requirements by including necessary information like barcodes and product-specific warnings.

QT9’s base package starts at $2,200 per concurrent user per year, so the total price depends on how many user licenses you need. Initial training and implementation fees also add to the final cost.

Get pricing details, pros, and cons in our QT9 QMS review.

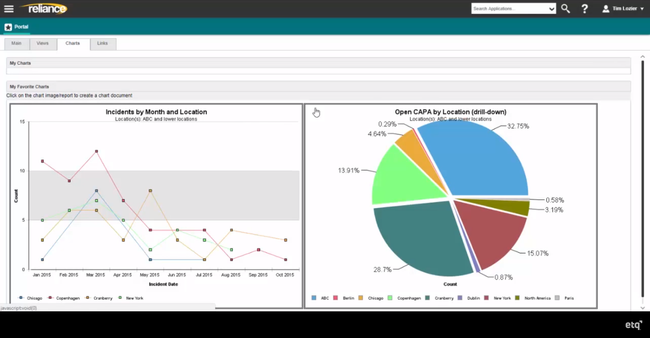

Octave Reliance - Best for Manufacturing

Octave Reliance uses a closed-loop quality process that connects root cause analysis (RCA), non-conformance reporting, and supplier corrective actions in one platform. It syncs with SPC tools and shop floor systems to capture defect data automatically. In manufacturing, where one missed issue can prompt downtime or rework, Octave shifts quality control from a reactive checklist into a proactive process that protects uptime and margins.

Non-conformances trigger built-in workflows, guiding your team through structured RCA tools like 5 Whys and Pareto charts. Octave will prompt them to evaluate the severity and impact using predefined criteria. From there, your quality engineers can create an action plan with clear task assignments. This means fewer delays and faster resolutions, keeping your production schedules on track.
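To make the Pareto step concrete, here is a minimal sketch that ranks defect categories by count and reports each one’s cumulative share. The defect names and counts are invented for illustration, and `pareto_table` is a hypothetical helper, not something Octave Reliance exposes.

```python
from collections import Counter


def pareto_table(defects):
    """Return (category, count, cumulative_percent) rows, largest count first."""
    counts = Counter(defects)
    total = sum(counts.values())
    rows = []
    running = 0
    for category, count in counts.most_common():
        running += count
        rows.append((category, count, round(100 * running / total, 1)))
    return rows


# Hypothetical defect log: one entry per non-conformance report.
sample_defects = (
    ["porosity"] * 12 + ["burr"] * 5 + ["misalignment"] * 2 + ["scratch"] * 1
)

for category, count, cumulative in pareto_table(sample_defects):
    print(f"{category:<14}{count:>4}{cumulative:>8}%")
```

In this made-up data, the top two categories account for 85% of reports, which is the kind of signal a team would use to decide where to start root cause analysis.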

Octave Reliance also extends upstream. If the issue stems from a vendor, the platform launches a SCAR (Supplier Corrective Action Request). Your vendors can access these via a secure portal to address the problem directly, reducing defects in incoming materials and rework costs. Overall, it’s a solid pick for larger manufacturers dealing with diverse supply chains and compliance with standards like ISO 9001.

Find out if Octave Reliance is right for your operations.

Singlepoint - Best No-Code QMS for Manufacturing

Singlepoint QMS is built for manufacturing teams on a no-code, drag-and-drop configuration platform. It lets quality teams build and modify forms, workflows, business rules, and reports without developer support. If your internal processes don’t fit a standard template, such as a custom gated NPI process or a non-standard CAPA workflow, you can build them yourself.

The QMS includes a Visual Navigator that lets administrators create image-based navigation interfaces for shop floor users. Instead of clicking through menus, operators see a visual map of the factory floor and tap into the relevant process documentation. For manufacturers certified to ISO 9001 or IATF 16949, this reduces training time and enables non-technical staff to get up to speed faster.

Pricing starts at $100/user/month for the Core tier. This positions Singlepoint as an affordable option for QMS document control that still covers audits, CAPA, and supplier management. The Professional tier adds advanced workflow automation, AI features, and API access at a custom price. Both plans are available on monthly or annual billing.

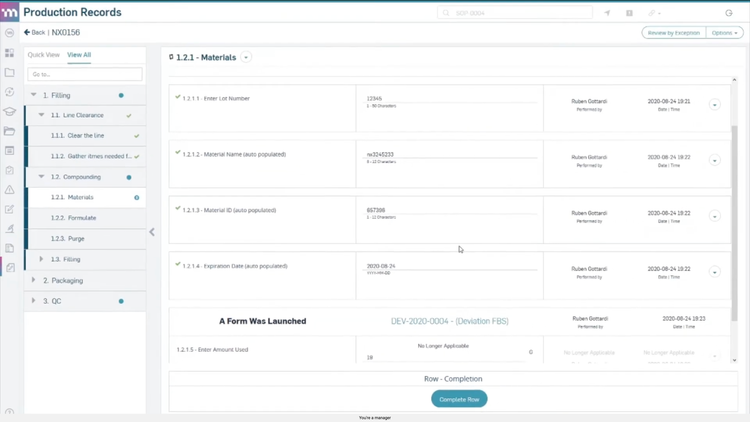

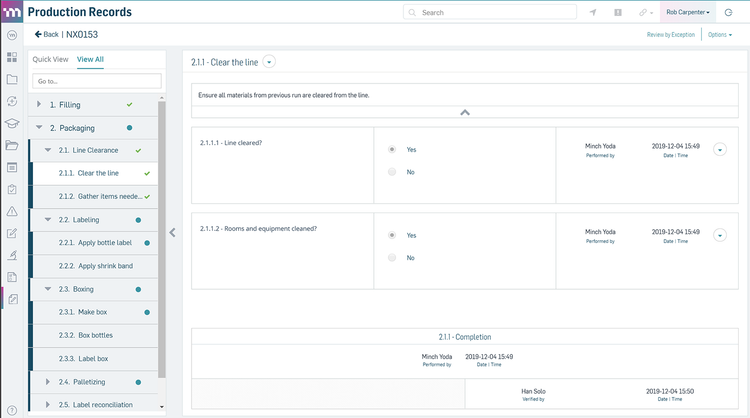

MasterControl - Best for Medical Devices

MasterControl includes an electronic device history record (eDHR) tool that captures live data straight from your equipment. It flags deviations the moment they happen and links them to batch records, streamlining approvals by verifying every routing step is complete and compliant.

MasterControl stores all production and quality data in an FDA-compliant environment, with full support for 21 CFR Part 820 (Quality System Regulation for medical devices). This means time-stamped audit trails, strict access controls, and full traceability for product changes over time. When issues arise, like a potential recall, you can quickly pinpoint affected products and root causes. That way, you can protect patient safety and respond confidently to regulators.

To meet FDA 21 CFR Part 11 requirements, this software also supports electronic signatures, confirming that only authorized staff performed key actions like approvals, inspections, and verifications. Plus, it links employee training records to day-to-day operations, preventing unqualified personnel from performing regulated tasks.

Though MasterControl does not provide public pricing details, we estimate that its base cost is around $1,000/month. However, the exact cost can vary depending on your desired features and total user count.

View our profile on MasterControl to learn more.

Ideagen - Best for Food and Beverage

Ideagen Quality Management, formerly Qualtrax 1100, offers non-conformance and incident management modules to meet challenges like allergen tracking, temperature deviations, and product recalls. When customer complaints or safety issues arise, the system logs them and assigns them to records. It then links them to workflows to help resolve the problem.

Pre-configured or customizable workflows guide your team step-by-step through the resolution process, ensuring compliance with frameworks like HACCP, FSMA, and the UK Food Safety Act. For example, if a temperature deviation occurs during storage, the system can notify stakeholders, catalog inspection results, and track corrective measures to ensure traceability during recalls.

Additionally, Ideagen supports root-cause analysis by associating related records for broader oversight. For example, if multiple incidents involve a specific ingredient, the system can group and evaluate these records to highlight patterns or trends. The system also maintains full audit trails, allowing your team to attach supporting documents, results, or certificates.

Ideagen includes an industry-specific edition for food and beverage to better comply with standards like BRC and EU GFLR. While the system is flexible for small businesses and large enterprises, its high pricing may deter very small companies. Additionally, some users find the interface outdated and in need of a more streamlined, responsive design.

View our profile on Ideagen Quality Management.

Greenlight Guru - Best Integrated Design Controls

As medical device companies grow, the biggest bottleneck is generally around scaling product development without losing traceability. The integrated design controls in Greenlight Guru ensure that every device is assigned to its own project workspace, which serves as a complete design file.

As you build out risk elements and tests, the system generates your DHF and quietly handles version control, all in the background. User needs, design inputs & outputs, verification activities, and validation tests all live in a linked structure. You can select any requirement and trace it from concept to test results; meanwhile, all upstream and downstream dependencies are automatically updated, providing your engineering and QA teams with a current view of the product.
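The linked structure described above can be pictured as a small directed graph. The sketch below is a simplified illustration, not Greenlight Guru’s data model: the item IDs, the `links` mapping, and the `downstream` function are all hypothetical, and real design control traceability carries far more metadata.

```python
from collections import deque

# Hypothetical trace links: each item points to the items derived from it.
# UN = user need, DI = design input, DO = design output,
# VER = verification activity, VAL = validation test.
links = {
    "UN-1": ["DI-1", "DI-2"],
    "DI-1": ["DO-1"],
    "DI-2": ["DO-2"],
    "DO-1": ["VER-1"],
    "DO-2": ["VER-2"],
    "VER-1": ["VAL-1"],
    "VER-2": ["VAL-1"],
}


def downstream(start, links):
    """Breadth-first walk returning every item reachable from `start`."""
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in links.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


print(downstream("UN-1", links))
print(downstream("DI-2", links))
```

Walking the graph from a user need lists everything that would need review if that need changed, which is the idea behind tracing a requirement from concept to test results.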

Greenlight Guru also integrates risk management into its system, mapping hazards and controls in line with ISO 14971. It then ties these back to any design elements they impact. This makes audit prep far more manageable, as you can export a complete risk matrix or DHF with just a few clicks.

Pricing starts at $600/user/month, with packages priced per user. While it doesn’t offer a free version or trial, it’s ideal for smaller companies in the medical device industry. Its laser focus on med-tech means pharma or biotech teams may find the scope too narrow for their needs.

Get pros, cons, and pricing in our Greenlight Guru review.

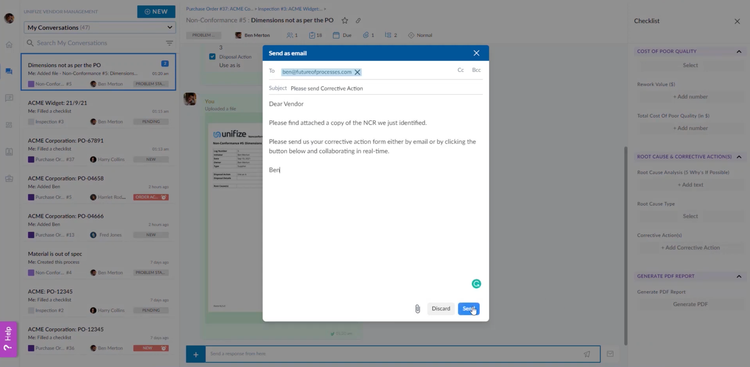

Unifize EQMS - Best for Automotive

Unifize EQMS includes built-in vendor collaboration tools to help address non-conformances in components and comply with quality standards like IATF 16949. For example, it can import purchase orders directly from your ERP system when sourcing parts like alternators or fuel injectors. It then automatically initiates inspection records based on predefined checklists. This ensures critical safety components meet quality criteria before assembly ever begins, reducing costly recalls and warranty claims.

Your vendors can communicate with you through Unifize Light, a streamlined chat interface that doesn’t require full platform access. Suppliers can provide updates, clarify inspection results, or resolve issues in real time, which is essential to maintaining production timelines and reducing downtime.

When an inspection uncovers a non-conformance, Unifize links the issue directly to supplier conversations and corrective action workflows. Any non-conformances are digitized, signed, and sent to vendors for corrective action. These records ensure traceability and record a comprehensive audit trail of supplier interactions.

Unifize’s tiered pricing starts at $50/user/month for up to ten processes. Processes are distinct workflows or tasks within the system, like non-conformance management or inspection records. There’s a free-for-life tier available for smaller operations with up to five processes and users.

Learn more about Unifize EQMS, including pricing details and key features.

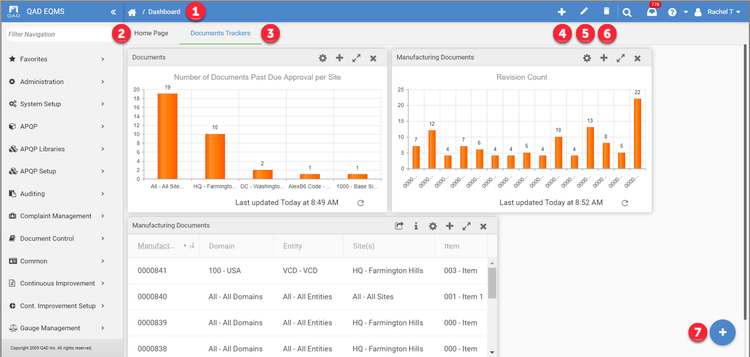

QAD EQMS - Best Supplier Quality Management Tools

QAD EQMS provides a strong supplier quality management module that helps measure vendor performance while resolving supplier-driven risks like defects or cost overruns. This module syncs with other QAD tools like advanced product quality planning (APQP), non-conformance and corrective action, and complaint management, forming a closed-loop system to maintain quality across your supply chain. This ensures that issues like defective shipments are analyzed, resolved, and linked to vendor processes for proactive improvements.

Suppliers can communicate with you directly via self-service portals. You can share important documents, like drawings and standards, and allow your vendors to submit deviation requests or corrective action responses. Built-in root cause analysis tools like Ishikawa diagrams for visual cause mapping make it easier to work together to resolve challenges.

To simplify processes, QAD EQMS auto-calculates chargebacks for defective shipments and flags recurring issues to help vendors adhere to your standards. The system also supports supplier audits, letting you document performance with customizable templates and scoring tools. You can monitor the certifications suppliers need to maintain, like ISO 9001 or IATF 16949, and QAD automates renewal notifications so your vendors stay compliant and you avoid quality gaps.

Find out if QAD EQMS is right for your business.

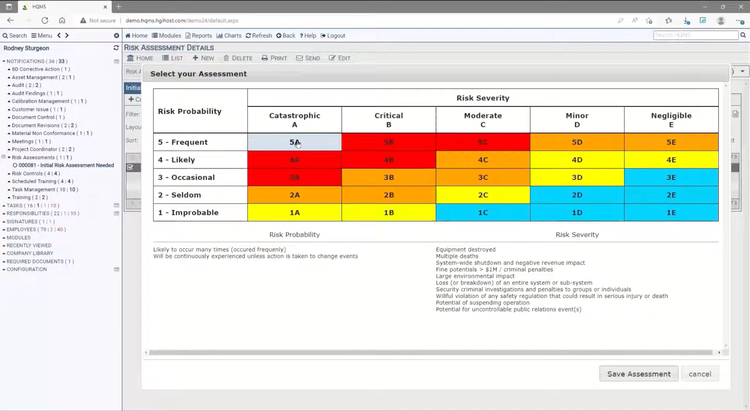

Harrington QMS - Best for ISO Quality Compliance

HQMS is quality management software designed for enterprises seeking to standardize, control, and enhance their ISO-compliant frameworks. Built by Harrington Group International, the system brings together documentation, CAPA, audits, risk management, and training on a single platform.

While HQMS supports many quality standards, it’s particularly strong for businesses operating under ISO 9001, ISO 13485, and ISO 14001. The system strictly enforces the documentation, review, and correction of quality activities, including corrective and preventive actions (CAPA) and supplier management. This makes it easier for organizations to demonstrate consistency and traceability during ISO reviews and audits. For training and risk management, both core parts of ISO compliance, HQMS links employees’ qualifications and individual risk assessments directly to the tasks they perform, ensuring regulated work is completed only by trained personnel.

HQMS is best for organizations that must comply with multiple quality standards and for which a consistent traceability process is critical. We have seen successful implementations across the manufacturing, healthcare, and aerospace industries. That said, smaller teams just getting started in quality management might find its enterprise-level, quote-based pricing more expensive than other entry-level programs.

Learn more about what industries and applications HQMS supports.

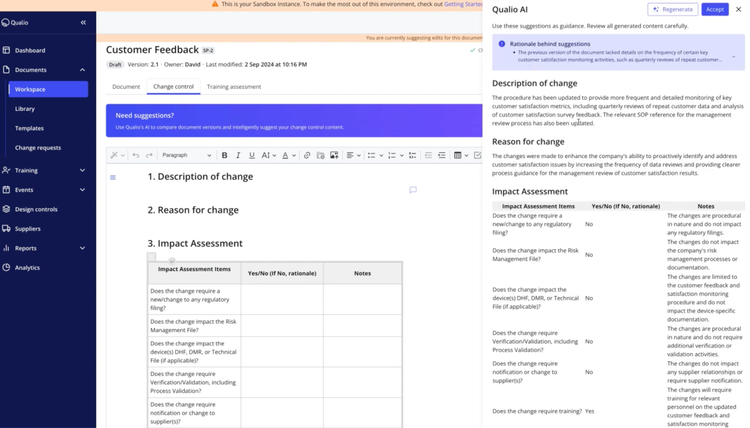

Qualio - Best Document Management Tools

Qualio QMS provides an in-app editor for creating, managing, and editing everything from standard operating procedures (SOPs) to technical drawings. Use the conversion tool to pull legacy files like paper-based blueprints and quality records into your system. Once uploaded, your teams can annotate documents directly within the platform.

By storing all information in a centralized location, Qualio ensures you’re always working with the latest versions of training records, supplier documentation, and policies. The platform supports embedded e-signatures aligning with regulations like FDA 21 CFR Part 11 and EU Annex 11. This feature prevents tampering post-signature, preserving document integrity.

Further, Qualio includes AI-driven change summary generation. As you modify SOPs or other critical records, the AI monitors and summarizes these updates and their significance within seconds. Version tracking archives changes for quick retrieval during audits, and built-in rules format summaries according to ISO and FDA standards.

Qualio starts at $12,000 for the platform, with an additional $3,000 per user per year. The pricing model scales to accommodate growing teams. It suits industries with strict regulatory demands, including medical devices, pharmaceuticals, and biotech.
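As a rough worked example of the pricing above, the sketch below adds the platform fee to the per-user fees. It assumes the $12,000 platform fee recurs annually, which the listing doesn’t confirm, and it ignores implementation, training, and any discounts. The function name is ours, purely for illustration.

```python
PLATFORM_FEE = 12_000  # USD; assumed to be charged once per year
PER_USER_FEE = 3_000   # USD per user per year, as quoted above


def qualio_annual_estimate(users: int) -> int:
    """Estimate a list-price annual cost for a given number of users."""
    if users < 0:
        raise ValueError("users must be zero or greater")
    return PLATFORM_FEE + PER_USER_FEE * users


for team_size in (5, 10, 25):
    print(team_size, qualio_annual_estimate(team_size))
```

Under these assumptions, a five-user team lands at $27,000 per year and a ten-user team at $42,000.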

View our profile on Qualio QMS.

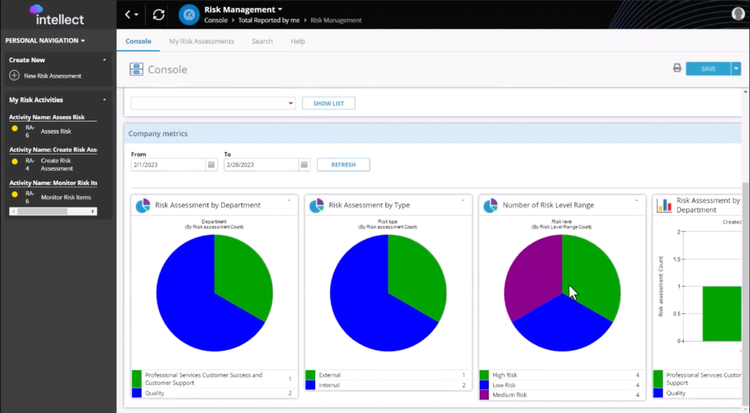

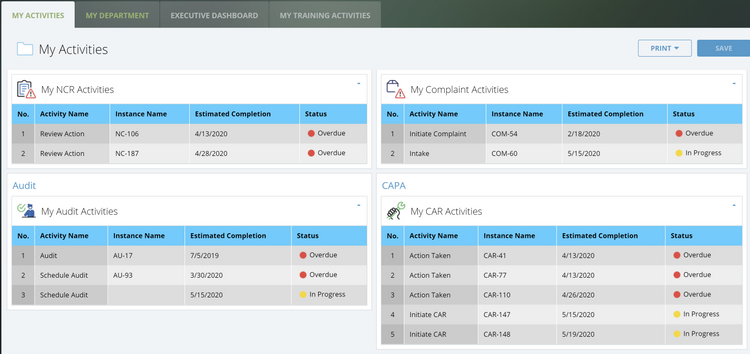

Intellect QMS - Best Risk Management Tools

The risk management system in Intellect QMS covers the entire lifecycle, from issue identification to resolution. You can create new risk items directly in the platform, classifying them as low, medium, or high priority. Alternatively, the software can escalate them automatically from related modules, like NCR, CAPA, or supplier management, when a trigger event occurs, such as a failed quality check. You can use the navigation dashboard from there to track and monitor individual and organizational metrics.

Intellect QMS prioritizes tasks by calculating severity, detection scores, and likelihood of occurrence. This helps you focus on the most pressing challenges. Both manual and automated workflows assign action items to individuals for better accountability. The platform even includes resolution tracking for a comprehensive audit trail of completed tasks.
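One common way to combine those three factors is the risk priority number (RPN) from FMEA practice: severity times occurrence times detection, each scored from 1 to 10. The sketch below uses that convention as an assumption, since Intellect’s actual scoring formula isn’t published here. The `RiskItem` class and the sample risks are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RiskItem:
    name: str
    severity: int    # 1 (negligible) to 10 (catastrophic)
    occurrence: int  # 1 (rare) to 10 (almost certain)
    detection: int   # 1 (almost always caught) to 10 (rarely caught)

    def rpn(self) -> int:
        """Risk priority number: severity x occurrence x detection."""
        for score in (self.severity, self.occurrence, self.detection):
            if not 1 <= score <= 10:
                raise ValueError("scores must be between 1 and 10")
        return self.severity * self.occurrence * self.detection


def prioritize(items):
    """Sort risks so the highest RPN comes first."""
    return sorted(items, key=lambda item: item.rpn(), reverse=True)


# Hypothetical risk register entries.
risks = [
    RiskItem("Supplier cert expired", severity=6, occurrence=3, detection=2),
    RiskItem("Failed incoming inspection", severity=8, occurrence=4, detection=5),
    RiskItem("Label misprint", severity=4, occurrence=2, detection=3),
]

for item in prioritize(risks):
    print(f"{item.rpn():>4}  {item.name}")
```

Note that detection is scored in reverse: a problem that is hard to catch gets a higher number, so it pushes the item up the list.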

Customize reports to gain deeper insights into risk trends and resolutions. Intellect includes strong search and navigation tools to locate specific risk assessments, simplifying audits and compliance checks. A user-friendly console lets you track both personal and company-level risks; you’ll always know what’s open, in progress, or resolved.

Intellect QMS best serves medium-sized to large enterprises due to its scalability and advanced feature set. It’s not a good fit for very small companies needing a straightforward, out-of-the-box solution; for those, we recommend QT9 QMS as an alternative.

Learn more about Intellect QMS, including pros, cons, and features.

ComplianceQuest - Best Salesforce-Native QMS

ComplianceQuest is an AI-driven EQMS and EHS platform ideal for organizations already running Salesforce for CRM or other operations. Because it’s built on the Salesforce platform, it provides a unified data model, native integrations, and shared security architecture.

As a modular system, ComplianceQuest allows you to start with core quality processes and add modules as your needs grow. For example, a medical device startup could begin with CAPA and document control, then layer on EHS and supplier quality as production evolves. Overall, it covers the full EQMS suite: audit management, change control, complaints, non-conformance, and training. ComplianceQuest includes IMDRF codes in its risk management module, simplifying regulatory reporting for med tech teams under ISO 13485 and FDA 21 CFR Part 820.

ComplianceQuest also provides AI- and machine-learning-powered analytics that highlight patterns across quality events. That way, your team can move from reactive resolutions to proactive risk identification. Additionally, the system automatically routes tasks based on qualifications and roles; 21 CFR Part 11-compliant electronic signatures also help manage approval chains without third-party add-ons.

This EQMS software is best suited for mid-sized to large organizations in life sciences, manufacturing, automotive, and aerospace. You can still adopt ComplianceQuest even if you’re not on Salesforce; however, the platform’s greatest value lies in that native integration.

Other Systems We Like

Qualcy eQMS is affordable at $399/month plus $99/admin user. It’s best suited for the pharmaceutical, medical device, and biomedical sectors.

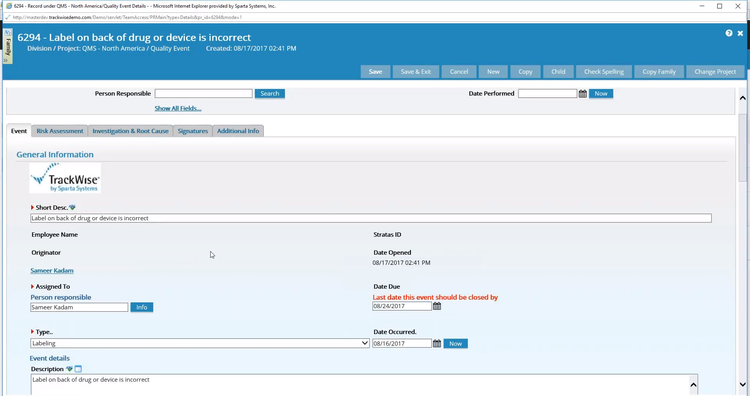

TrackWise includes strong CAPA and audit management for large manufacturers. It uses cross-functional record-keeping to link related records, like audit findings, to corrective actions for a clear audit trail.

Veeva Vault QMS is ideal for life sciences enterprises that need their QMS tightly integrated with clinical, regulatory, and document management, all on Veeva’s unified Vault platform. It’s ideal for large pharma, biotech, and medical device organizations already in the Veeva ecosystem or currently evaluating it.

What is Quality Management System (QMS) Software?

Quality management software helps businesses manage and maintain industry-specific, customer-based, and regulatory standards for their products, services, and processes. It combines document control, CAPA, and compliance and audit management to streamline quality-related tasks.

In particular, QMS helps businesses meet the regulatory requirements of ISO and FDA, in addition to policies set by other government and sector-specific agencies.

This helps you standardize processes, increase customer satisfaction, and mitigate financial risks related to quality exceptions.

Read more: What is a Quality Management System (QMS)? - ISO 9001 Processes

What is EQMS?

Enterprise quality management systems (EQMS) have a greater scope than generic QMS solutions. EQMS centralizes and optimizes quality management across an entire organization. This includes:

- Enterprise-wide data visibility: Delivers real-time metrics across organizational processes, including product quality performance, compliance adherence, process efficiency, risk management, and customer satisfaction.

- Scalability: Supports large organizations’ complex workflows and global operations.

- Cross-department integration: Syncs quality management efforts across locations and teams.

Many QMS vendors use the two terms interchangeably.

Read more: What is EQMS?

Industry-Specific Types

Industry-Agnostic

Designed to apply across industries rather than tailored to a single sector’s specific needs. This software provides a framework for managing quality processes, compliance, and performance within an organization, regardless of its field of operation.

Medical

QMS software for medical devices helps ensure products are designed and manufactured in compliance with regulations set by the U.S. Food and Drug Administration (FDA), the European Union’s Medical Device Regulation (MDR), and other international standards like ISO 13485.

Food and Beverage

Verifies the safety and quality of food products from production to distribution. Facilitates compliance with HACCP (Hazard Analysis and Critical Control Points), FDA regulations including the Food Safety Modernization Act (FSMA), and GFSI (Global Food Safety Initiative) benchmarks.

Pharmaceutical

Pharmaceutical QMS software helps drug manufacturers automate quality processes, documentation, and compliance to ensure medicine is safe and effective. It adheres to pharmaceutical regulations like FDA 21 CFR Part 210/211, Part 11 (for electronic records), and Good Manufacturing Practices (GMP).

Automotive

Helps design, develop, test, and manufacture vehicles and their components. It enhances engine management, safety systems, infotainment, and autonomous driving technologies. Automotive QMS aligns with automotive quality standards like IATF 16949.

Aerospace

Utilized in designing, simulating, testing, and maintaining aircraft and spacecraft, including commercial airplanes, military jets, drones, and satellites. It aids in maintaining complex systems and components, ensuring they meet rigorous safety and performance standards. Aerospace QMS ensures compliance with standards like AS9100.

| Problem | Feature | Product | Pricing |

|---|---|---|---|

| Error-prone manual production records. | Automated eDHR and FDA-compliant data storage. | MasterControl Manufacturing Excellence | Estimated $1,000/month. |

| Complex inspections for AS9100 compliance. | AS9100 tool with inspection integration. | uniPoint | Estimated $225/user/month. |

| Ensuring batch quality and compliance. | MBR/EBR with integrated QC data. | QT9 QMS | $2,200 per concurrent user/year. |

| High defect rates in heavy manufacturing. | Closed-loop quality process and SCAR workflows. | Octave Reliance | Contact vendor. |

| Managing non-conformance in food production. | Non-conformance workflows for HACCP compliance. | Ideagen Quality Management | Contact vendor. |

| Vendor issues affecting automotive quality. | Vendor tools with corrective action workflows. | Unifize EQMS | Starts at $50/user/month. |

| Measuring and improving supplier performance. | Supplier quality module with root cause analysis. | QAD EQMS | Contact vendor. |

| Managing critical documents in regulated settings. | In-app document editor with version tracking. | Qualio QMS | $12,000 platform fee + $3,000/user/year. |

| Tracking and resolving quality risks. | Risk lifecycle tools with priority scoring. | Intellect QMS | Contact vendor. |

| Maintaining traceability in medical device design. | Integrated design controls with DHF generation. | Greenlight Guru | $600/user/month. |

Quality Standards Examples

ISO standards include:

| ISO Standard | Meaning |

|---|---|

| ISO 9001 | Sets out the criteria for a quality management system and is the only standard in the ISO 9000 family that organizations can be certified to (although certification is not a requirement). It can be used by any organization, large or small, regardless of its field of activity |

| ISO 13485 | Similar to ISO 9001 but for medical devices. Specifically requires validation of software used in the quality system, with validation reports that show the auditor it works as intended. For U.S. markets, often paired with the electronic signature requirements of FDA 21 CFR Part 11 |

| ISO 50001 | Energy management standard for addressing the energy consumption and use of an organization |

| ISO 27001 | Information security, similar to ISO 9001 but with a change in focus to data security and integrity vs product quality |

| ISO 45001 | Health and safety, similar to ISO 9001 but with a change in focus to employee safety vs. product quality |

| ISO 14001 | Environmental management, similar to ISO 9001 but with a focus on reducing environmental impact, managing waste, and meeting regulations |

| ISO 17025 | Laboratory testing and calibration |

FDA regulations include:

| FDA | Meaning |

|---|---|

| FDA 21 CFR Part 11 | Electronic records and electronic signatures |

| FDA 21 CFR Part 211 | Drug and pharmaceutical manufacturing |

| FDA 21 CFR Part 820 (now updated to the new FDA QMSR) | Medical device manufacturing and distribution |

Other common examples of quality standards include:

- API Q1/Q2: Standards specific to the oil and gas industry that are similar in nature to ISO 9001. API Q2 has an explicit requirement for contingency planning. Both API Q1 and Q2 distinguish between corrective and preventive action (something ISO 9001 does not)

- ASME: Highly focused on product specifications and work instructions. Little emphasis on standard QMS requirements such as nonconformities and corrective action

- API Design Stamps: Similar to ASME. Components have specific technical requirements to be met. Generally coupled with API Q1/Q2

- FAR Part 46: Federal Acquisition Regulation requirements for quality assurance, specifying contractor responsibilities for compliance, inspections, and corrective actions

- IATF 16949: Automotive quality management standard similar to ISO 9001 with additional requirements

- AS9100: Aerospace quality management standard similar to ISO 9001 with additional requirements

- HACCP: Hazard Analysis and Critical Control Points, a preventive approach to food safety

- SQF: Food quality management

Non-ISO Standards

- Statistical Process Control (SPC): Identify product quality issues and process variations to take corrective action before extensive issues occur and improve process performance. May pair well with a QMS but is better used to report on the output of the production process.

- Production Design Lifecycle System (PDLC): Mainly used to show verification and validation of product design requirements. Focuses on the interaction between suppliers, engineering, and test data. Includes the production part approval process (PPAP).
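To show what the SPC entry above looks like in practice, here is a minimal sketch of an individuals control chart. It estimates sigma from the average moving range divided by the standard constant d2 = 1.128, then flags new measurements outside the three-sigma limits. The measurements are made up, the function names are hypothetical, and a real SPC tool would apply additional run rules.

```python
from statistics import mean

D2_N2 = 1.128  # control chart constant d2 for moving ranges of two points


def control_limits(baseline):
    """Return (lower, center, upper) limits for an individuals chart."""
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma = mean(moving_ranges) / D2_N2
    return center - 3 * sigma, center, center + 3 * sigma


def out_of_control(values, limits):
    """Return (index, value) pairs that fall outside the control limits."""
    lower, _, upper = limits
    return [(i, v) for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical part dimensions in millimeters from a stable baseline run.
baseline = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 10.2, 9.8]
limits = control_limits(baseline)

new_measurements = [10.1, 9.9, 11.5, 10.0]
print(limits)
print(out_of_control(new_measurements, limits))
```

Only the third new measurement falls outside the limits here, which is the kind of signal that would prompt corrective action before more parts are affected.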

Common Challenges

Implementing QMS software can help solve several different challenges that companies across every industry experience, including:

- Compliance Issues: Maintaining compliance through manual inspections, history tracking, and audits leaves you prone to human error and gaps in accountability. A QMS helps you adhere to your industry’s regulatory standards and reduces the risk of violations.

- Lack of Standardization: Many businesses struggle to standardize processes company-wide. QMS software lets you create clear operating procedures to ensure consistency and quality.

- Inefficient Document Management: Often, companies lack a central location to manage their documents, leading to lost files or scattered filing systems. QMS software includes a document management module to create, approve, distribute, and archive essential quality control files.

Key Features & Benefits

QMS software offers a wide variety of functionality. Modules often include the following core functions:

| Feature | Description | Benefit |

|---|---|---|

| Quality Control | Set objectives on quality processes related to cycle times, scrap/waste percentages, defect rates, measurement deviations, and durability metrics. | Improves product quality and reduces waste. |

| Risk Management and Analysis | Create what-if scenarios to analyze potential costs related to quality exceptions. Predict failure and service rates and their financial implications. | Allows manufacturers to identify root causes of quality issues and mitigate risks effectively. |

| Corrective Action/Preventive Action (CAPA) | Includes task assignments, reporting, and monitoring to ensure quality deviations are addressed promptly. | Promotes proactive issue resolution and maintains quality standards. |

| Parts Non-conformance | Define measurement standards for parts, track a database of standardized metrics, and integrate monitoring controls with operational equipment. | Ensures parts meet quality specifications and reduces non-conformance rates. |

| Customer Complaint Management | Assist service personnel in quickly resolving customer issues. | Enhances customer satisfaction and loyalty. |

| Audits and Inspections | Define, schedule, and execute regular audits and inspections to improve business processes continuously. | Ensures regulatory compliance and identifies areas for improvement. |

| Document Management | Coordinate data management, facilitate collaboration, and ensure easy access to important quality documentation. | Simplifies compliance and improves team collaboration. |

| Training Management | Ensure employees are trained and certified in key areas to meet compliance standards. | Ensures workforce readiness and maintains regulatory compliance. |

| Reporting and Business Intelligence | Reference KPIs and dashboards to turn quality management data into actionable insights. | Provides data-driven decision-making capabilities. |

| Regulatory Compliance | Simplify monitoring and reporting to meet industry-specific regulatory standards such as FDA, ISO 9001, or HAACP. | Reduces the complexity of compliance and avoids penalties or legal action. |

Pricing

QMS pricing can range from $5,000 to over $150,000 per year, though it varies based on features, intended use case, and deployment model:

| Tier | Company Size | Total Cost of Ownership (TCO) | Example Software |
|---|---|---|---|
| Low-Tier | 1–25 employees | $5,000–$15,000 per year | Qualio, QT9 QMS |
| Mid-Tier | 25–150 employees | $20,000–$50,000 per year | Greenlight Guru, Intellect QMS |
| High-Tier | 150–500 employees | $50,000–$150,000 per year | MasterControl, Octave Reliance |
| Enterprise | 500+ employees | $150,000+ per year | Pilgrim SmartSolve, Veeva Vault QMS |

Overall, exact pricing depends on several factors, including:

- Total user count

- Additional integrations

- Required functionality

Generally, on-premises software carries a one-time perpetual licensing fee, while a cloud platform is billed as a monthly or annual subscription. The one-time fee and setup often result in a higher cost of entry, while SaaS can be more expensive over time.
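To see how that trade-off can play out, here is a minimal Python sketch that compares cumulative spend under the two models. All figures are illustrative assumptions rather than vendor quotes, and the annual maintenance fee on the perpetual license is also an assumption. The function names `cumulative_cost` and `break_even_year` are hypothetical.

```python
def cumulative_cost(upfront: float, recurring_per_year: float, years: int) -> float:
    """Total spend after a number of years: one-time costs plus recurring fees."""
    return upfront + recurring_per_year * years


def break_even_year(perpetual_upfront, perpetual_annual, saas_annual, horizon=15):
    """Return the first year in which cumulative SaaS spend exceeds cumulative
    on-premises spend, or None if that never happens within the horizon."""
    for year in range(1, horizon + 1):
        saas_total = cumulative_cost(0, saas_annual, year)
        on_prem_total = cumulative_cost(perpetual_upfront, perpetual_annual, year)
        if saas_total > on_prem_total:
            return year
    return None


# Illustrative figures only (assumptions, not real pricing):
# $60,000 perpetual license and setup, $10,000/year maintenance,
# versus a $25,000/year subscription.
year = break_even_year(perpetual_upfront=60_000, perpetual_annual=10_000, saas_annual=25_000)
print(year)  # 5
```

With these assumed numbers, the subscription is cheaper for the first three years, the two options are level at year four, and SaaS becomes the more expensive choice from year five. Real comparisons would also need to account for hardware, IT staffing, and upgrade costs.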

Integrating QMS & ERP

A QMS draws on information from across the enterprise, much of which lives in larger ERP software. Because a QMS works best when it takes a holistic view of quality across the business, companies may need to manage integrations with other information systems, such as:

- Supply chain management software manages the provisioning of goods from suppliers to the customer. Relaying information regarding quality issues stemming from certain suppliers is an essential piece of the quality management puzzle.

- Customer relationship management software programs provide a coordinated approach to capturing customer-specific information. Order histories and other customer interactions are logged into CRM programs. The ability to pass quality issue information from CRM software to QMS programs is essential. It helps ensure that quality issues raised after the sale are addressed through both service work and process improvement.

- Material requirements planning software manages the components needed for production and the processes that go into the manufacturing operations. Importing quality-related information from the QMS to the MRP system will help create more accurate manufacturing plans and forecasts.

Trends & Future Outlook

The global quality management software market, valued at $11 billion in 2024, is projected to reach over $20 billion by 2030, according to Grand View Research. This growth is driven by rapidly developing technologies such as:

AI Technology and Automated Quality Control

AI-powered quality management systems are shifting from reactive to predictive control. They use machine learning to catch deviations in real time. As of 2025, around 40% of top-tier QMS tools include AI analytics.

Cloud/SaaS vs. On-Premises Deployments

The cloud dominated the quality management software industry at 55% of total deployments in 2024, according to a Market Growth Reports article. Hosted cloud solutions provide multi-site access and scalability, though many regulated industries still require on-premises deployments for data sovereignty.

IoT Integration

The Internet of Things provides continuous monitoring of vital parameters. It auto-triggers non-conformance workflows when measurements exceed tolerances. This connectivity supports automated quality control where sensors record live data from production equipment.
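The tolerance check described above can be sketched in a few lines of Python. This is a simplified illustration, not any vendor's implementation: the `Tolerance` and `NonConformance` types, the `check_reading` function, the sensor ID, and the temperature limits are all hypothetical, and a real system would open a workflow in the QMS rather than collect records in a list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tolerance:
    lower: float
    upper: float


@dataclass(frozen=True)
class NonConformance:
    sensor_id: str
    parameter: str
    value: float
    limit_breached: str  # "lower" or "upper"


def check_reading(sensor_id: str, parameter: str, value: float,
                  tolerance: Tolerance) -> Optional[NonConformance]:
    """Return a non-conformance record if the reading falls outside the
    tolerance band (limits are inclusive), otherwise None."""
    if value < tolerance.lower:
        return NonConformance(sensor_id, parameter, value, "lower")
    if value > tolerance.upper:
        return NonConformance(sensor_id, parameter, value, "upper")
    return None


# Hypothetical oven sensor with an assumed 175-185 degree C tolerance band.
oven_temp = Tolerance(lower=175.0, upper=185.0)
readings = [179.8, 184.9, 186.2]

ncrs = []
for value in readings:
    ncr = check_reading("oven-01", "temperature_c", value, oven_temp)
    if ncr is not None:
        ncrs.append(ncr)  # a real QMS would start a non-conformance workflow here

print(ncrs)
```

Only the last reading exceeds the assumed upper limit, so it is the only one that produces a record.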

Digitalized Compliance

Compliance management tools include audit-tested QMS templates pre-configured for ISO 9001:2015, industry-specific standards, and FDA regulations. These templates integrate with workflow management systems featuring role-based task lists that auto-assign quality activities to personnel based on their certifications.
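As a rough sketch of how certification-based assignment might work, the Python example below matches each quality task to a qualified person and spreads work evenly among them. The `assign_tasks` function, the names, and the certification labels are hypothetical, and the least-loaded rule is an assumption chosen for illustration; actual products may use different logic.

```python
from collections import defaultdict


def assign_tasks(tasks, personnel):
    """Assign each task to the qualified person with the fewest tasks so far.

    tasks: list of (task_name, required_certification) tuples
    personnel: dict mapping a person's name to their set of certifications
    Returns a dict mapping task_name to an assignee, or None if nobody qualifies.
    """
    workload = defaultdict(int)
    assignments = {}
    for task_name, required_cert in tasks:
        qualified = [name for name, certs in personnel.items() if required_cert in certs]
        if not qualified:
            assignments[task_name] = None  # flag for a training or hiring gap
            continue
        # Ties on workload are broken alphabetically to keep results deterministic.
        assignee = min(qualified, key=lambda name: (workload[name], name))
        workload[assignee] += 1
        assignments[task_name] = assignee
    return assignments


# Hypothetical staff and tasks.
personnel = {
    "Avery": {"ISO 9001 internal auditor", "calibration"},
    "Blake": {"calibration"},
}
tasks = [
    ("Quarterly internal audit", "ISO 9001 internal auditor"),
    ("Caliper calibration", "calibration"),
    ("Torque wrench calibration", "calibration"),
    ("Sterility review", "sterile processing"),
]

assignments = assign_tasks(tasks, personnel)
print(assignments)
```

Tasks left unassigned, like the sterility review here, point to a certification gap that training management would need to close.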